Search-Supervised Deep Learning

Training and Deploying Neural Connect 4 Agents

A full-stack ML system that trains neural networks on MCTS self-play data and deploys them as real-time interactive bots via AWS Lightsail and Anvil. Without any tree search at inference, the CNN reaches 78.3% and the hybrid CNN-Transformer 81.7% Top-1 move-prediction accuracy.

- Project Focus: Game AI, Supervised Learning, Cloud Deployment, Architecture Comparison
- Training Stack: Python, TensorFlow/Keras, NumPy, MCTS, Google Colab
- Deployment: AWS Lightsail, Docker, Anvil (Python web app)
- Dataset: ~40,000 board states from 1,500 MCTS self-play games

System Architecture

This project was architected as a production-style ML pipeline rather than a standalone notebook. Clear separation of responsibilities mirrors real-world deployment patterns.

MCTS Self-Play → Dataset Generation → Model Training (Colab GPU) → Model Serialization (.h5) → Dockerized Inference API (AWS Lightsail) → Anvil Frontend (Authenticated UI) → Human vs Bot Gameplay

| Layer | Responsibility |
|---|---|
| Training | Model development and experimentation |
| AWS Backend | Stateless inference only |
| Docker | Environment reproducibility |
| Anvil Frontend | Authentication, UI, state management |

Data Generation

Rather than hand-labeling positions, MCTS was used as a high-quality move generator. The pipeline ran 1,500 self-play games with 1,200 rollouts per move over ~15 hours. Randomized early moves increased diversity; duplicate board states were consolidated via majority vote.
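The majority-vote consolidation step can be sketched in plain Python. This is a minimal illustration under stated assumptions, not the project's code: each sample is assumed to be a (board, move) pair with the board stored as 6 rows of 7 integers, the helper names are hypothetical, and the lowest-column tie-break is an assumed rule, since the write-up does not say how ties were resolved.

```python
from collections import Counter, defaultdict


def board_key(board):
    """Hashable key for a board stored as 6 rows of 7 ints (hypothetical encoding)."""
    return tuple(tuple(row) for row in board)


def consolidate(samples):
    """Merge duplicate board states, labelling each with its majority MCTS move.

    samples: iterable of (board, move) pairs with move in 0..6.
    Ties go to the lowest column index (an assumed rule, not from the write-up).
    """
    votes = defaultdict(Counter)  # board key -> Counter of chosen columns
    boards = {}                   # board key -> one representative board
    for board, move in samples:
        key = board_key(board)
        votes[key][move] += 1
        boards.setdefault(key, board)
    dataset = []
    for key, counter in votes.items():
        # Highest vote count wins; -move breaks ties toward the lowest column.
        move, _ = max(counter.items(), key=lambda kv: (kv[1], -kv[0]))
        dataset.append((boards[key], move))
    return dataset
```

For example, three visits to the empty board labelled 3, 3 and 2 collapse into a single training example labelled 3.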

Model Results

| Metric | CNN | Hybrid Transformer |
|---|---|---|
| Top-1 Accuracy | 78.3% | 81.7% |
| Top-2 Accuracy | 92.4% | 94.1% |
| Inference Time | 1.2 ms | 3.8 ms |
| Training Time | ~20 min | ~2 hours |
| Best Use Case | Real-time, lightweight deployment | Maximum move prediction accuracy |

The hybrid model improves Top-1 accuracy by 3.4 percentage points. The CNN trades that small accuracy drop for roughly 3x faster inference (1.2 ms vs. 3.8 ms) and 6x faster training (~20 min vs. ~2 hours), making it the better fit for real-time deployment.
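Top-k accuracy is read here in its standard sense: the fraction of positions whose MCTS label is among the model's k highest-probability columns. The write-up does not show its evaluation code, so the `top_k_accuracy` helper below is a hypothetical NumPy sketch of that definition.

```python
import numpy as np


def top_k_accuracy(probs, labels, k):
    """Fraction of positions whose MCTS label is among the model's k most likely columns.

    probs:  (n_positions, 7) array of predicted move probabilities
    labels: (n_positions,) array of MCTS-chosen columns
    """
    probs = np.asarray(probs)
    labels = np.asarray(labels)
    top_k = np.argsort(probs, axis=1)[:, -k:]  # indices of the k largest probabilities
    hits = (top_k == labels[:, None]).any(axis=1)
    return float(hits.mean())
```

With k=1 this reduces to ordinary accuracy; k=2 credits the model whenever the MCTS move is its first or second choice, which is why the Top-2 column sits well above Top-1.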

Architecture Journey

CNN: Iterative Refinement

Initial models exposed classic failure modes: a shallow CNN underfit (~60% accuracy), a deep CNN overfit, and heavy regularization collapsed the model's effective capacity. The final CNN balances depth and generalization with progressive convolution blocks (32 → 256 filters), batch normalization, dropout scheduling, and Global Average Pooling.
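A Keras sketch of the final CNN's overall shape follows. Only the filter progression (32 → 256), batch normalization, dropout scheduling, and Global Average Pooling come from the write-up; the two-plane 6×7 input encoding, two convolutions per block, the specific dropout rates, and the optimizer are assumptions. "Dropout scheduling" is interpreted here as dropout that grows with depth.

```python
import tensorflow as tf

layers = tf.keras.layers

# Assumed encoding: 6x7 board, channel 0 = side-to-move pieces, channel 1 = opponent's.
BOARD_SHAPE = (6, 7, 2)
NUM_COLUMNS = 7


def conv_block(x, filters, dropout_rate):
    """Two 3x3 convolutions (batch norm + ReLU after each), then dropout."""
    for _ in range(2):
        x = layers.Conv2D(filters, 3, padding="same", use_bias=False)(x)
        x = layers.BatchNormalization()(x)
        x = layers.Activation("relu")(x)
    return layers.Dropout(dropout_rate)(x)


def build_cnn(filters=(32, 64, 128, 256), dropout_rates=(0.1, 0.2, 0.3, 0.4)):
    """Policy CNN: progressive conv blocks -> global average pooling -> 7-way softmax."""
    inputs = tf.keras.Input(shape=BOARD_SHAPE)
    x = inputs
    for f, rate in zip(filters, dropout_rates):  # dropout rises with depth
        x = conv_block(x, f, rate)
    # No pooling between blocks, so every block sees the full 6x7 grid.
    x = layers.GlobalAveragePooling2D()(x)
    outputs = layers.Dense(NUM_COLUMNS, activation="softmax")(x)
    model = tf.keras.Model(inputs, outputs, name="connect4_cnn")
    model.compile(
        optimizer="adam",
        loss="sparse_categorical_crossentropy",  # labels are column indices 0..6
        metrics=["accuracy",
                 tf.keras.metrics.SparseTopKCategoricalAccuracy(k=2, name="top2")],
    )
    return model
```

Global Average Pooling replaces a large flatten-plus-dense head, which keeps the parameter count of the classifier small and is one common lever against the overfitting described above.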

Pure Transformer

Performance plateaued at 46–55% accuracy. The 6×7 board offers too few tokens for attention to learn diverse representations, and transformers lack the spatial inductive bias that convolutions build in. Conclusion: without a feature extractor, transformers underperform on compact spatial domains.

Hybrid CNN–Transformer

A CNN feature extractor compresses the board into a 3×3 grid of spatial tokens, which pass through a 4-layer Transformer encoder and a dense classification head. Combining convolutional spatial priors with global attention gave the best overall accuracy.
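The hybrid pipeline can be sketched in Keras as well. Only the 3×3 token grid, the 4 encoder layers, and the dense head come from the write-up. Everything else is an assumption: the two-plane input encoding, embedding width 64, 4 attention heads, learned positional embeddings, a pre-norm encoder layout, and a single 4×5 "valid" convolution as the downsampling step (it maps 6×7 to exactly 3×3, so every cell feeds at least one token).

```python
import tensorflow as tf

layers = tf.keras.layers

BOARD_SHAPE = (6, 7, 2)  # assumed two-plane encoding, as in the CNN sketch
NUM_COLUMNS = 7


class LearnedPositionEmbedding(layers.Layer):
    """Adds a trainable vector to each token so attention can tell grid positions apart."""

    def build(self, input_shape):
        self.pos = self.add_weight(
            name="pos_embedding",
            shape=(input_shape[1], input_shape[2]),  # (num_tokens, dim)
            initializer="random_normal",
            trainable=True,
        )

    def call(self, x):
        return x + self.pos  # broadcasts over the batch dimension


def encoder_layer(x, dim, num_heads, dropout_rate):
    """Pre-norm Transformer encoder layer: self-attention and MLP, each with a residual."""
    h = layers.LayerNormalization()(x)
    h = layers.MultiHeadAttention(num_heads=num_heads, key_dim=dim // num_heads,
                                  dropout=dropout_rate)(h, h)
    x = layers.Add()([x, h])
    h = layers.LayerNormalization()(x)
    h = layers.Dense(2 * dim, activation="relu")(h)
    h = layers.Dense(dim)(h)
    h = layers.Dropout(dropout_rate)(h)
    return layers.Add()([x, h])


def build_hybrid(dim=64, num_layers=4, num_heads=4, dropout_rate=0.1):
    """CNN stem -> 3x3 token grid -> Transformer encoder -> 7-way softmax."""
    inputs = tf.keras.Input(shape=BOARD_SHAPE)
    x = layers.Conv2D(32, 3, padding="same", activation="relu")(inputs)  # local patterns, 6x7
    # A 4x5 'valid' convolution maps 6x7 -> 3x3 without discarding any cells.
    x = layers.Conv2D(dim, (4, 5), padding="valid", activation="relu")(x)
    tokens = layers.Reshape((9, dim))(x)  # 3x3 grid -> sequence of 9 tokens
    tokens = LearnedPositionEmbedding()(tokens)
    for _ in range(num_layers):
        tokens = encoder_layer(tokens, dim, num_heads, dropout_rate)
    x = layers.LayerNormalization()(tokens)
    x = layers.GlobalAveragePooling1D()(x)  # pool the 9 tokens
    outputs = layers.Dense(NUM_COLUMNS, activation="softmax")(x)
    return tf.keras.Model(inputs, outputs, name="connect4_hybrid")
```

The positional embedding matters here: without it, attention followed by average pooling would treat the 9 tokens as an unordered set.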

Tactical Error Analysis

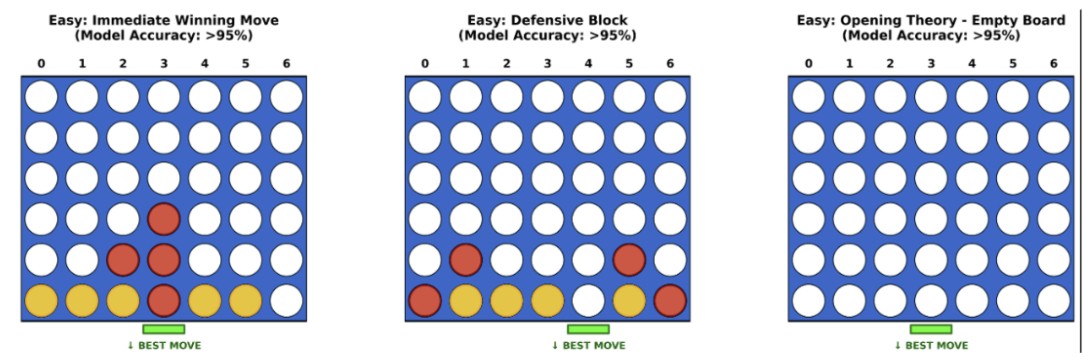

High Confidence (>95%)

- Immediate wins: 98%+ accuracy
- Forced defensive blocks
- Opening central control

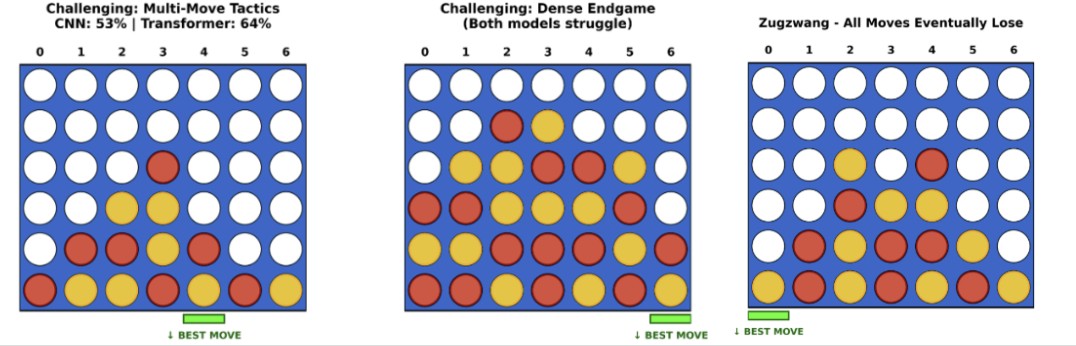

Failure Modes (<65%)

- Multi-move traps
- Dense endgames
- Zugzwang states

A policy network learns pattern recognition but, without search, cannot explicitly simulate future branches. This is why systems such as AlphaGo and AlphaZero combine a learned policy with Monte Carlo tree search at play time instead of relying on the network alone.
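The high-confidence categories (immediate wins, forced defensive blocks) are exactly the positions a one-move lookahead can label, which makes them easy to isolate when bucketing an evaluation set. A minimal pure-Python sketch, assuming a board stored as 6 rows of 7 ints (row 0 at the top, 0 = empty, 1 and 2 = players); all helper names are hypothetical.

```python
ROWS, COLS = 6, 7


def drop(board, col, player):
    """Return a copy of `board` with `player`'s piece dropped into `col`, or None if full."""
    for row in range(ROWS - 1, -1, -1):  # scan upward from the bottom row
        if board[row][col] == 0:
            new = [r[:] for r in board]
            new[row][col] = player
            return new
    return None


def is_win(board, player):
    """True if `player` has four in a row horizontally, vertically, or diagonally."""
    for r in range(ROWS):
        for c in range(COLS):
            for dr, dc in ((0, 1), (1, 0), (1, 1), (1, -1)):
                cells = [(r + i * dr, c + i * dc) for i in range(4)]
                if all(0 <= rr < ROWS and 0 <= cc < COLS and board[rr][cc] == player
                       for rr, cc in cells):
                    return True
    return False


def winning_moves(board, player):
    """Columns where `player` wins immediately."""
    moves = []
    for col in range(COLS):
        nxt = drop(board, col, player)
        if nxt is not None and is_win(nxt, player):
            moves.append(col)
    return moves


def forced_blocks(board, player):
    """Columns `player` must play to stop the opponent's immediate win."""
    return winning_moves(board, 3 - player)
```

Multi-move traps and zugzwang positions have no such one-ply test, which is consistent with where the models' accuracy drops.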

Deployment

AWS Lightsail Backend

- Dockerized TensorFlow inference service
- Model loaded at startup, stateless requests
- Returns probability distribution over 7 moves
- Deterministic, low-latency inference (<4 ms)
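A minimal sketch of a stateless request path, assuming a JSON body with a hypothetical `board` field and a 6×7 integer board (row 0 at the top, 0 = empty). Masking full columns before taking the argmax is an assumed safeguard; the write-up only says the service returns a distribution over 7 moves. `predict` stands in for the model loaded once at startup.

```python
import json

import numpy as np


def choose_move(board, probs):
    """Pick the most probable legal column from a 7-way move distribution.

    A column is legal while its top cell (board[0][col]) is empty. Illegal
    columns are zeroed and the rest renormalised; if the model put no mass on
    any legal column, fall back to a uniform distribution over legal columns.
    """
    board = np.asarray(board)
    probs = np.asarray(probs, dtype=float)
    legal = board[0] == 0
    if not legal.any():
        raise ValueError("board is full")
    masked = np.where(legal, probs, 0.0)
    total = masked.sum()
    masked = masked / total if total > 0 else legal / legal.sum()
    return int(np.argmax(masked)), masked


def handle_request(body, predict):
    """Stateless handler: JSON board in, JSON move and distribution out."""
    board = json.loads(body)["board"]
    move, dist = choose_move(board, predict(board))
    return json.dumps({"move": move, "probabilities": dist.round(4).tolist()})
```

Because the handler keeps no state between calls, game state lives entirely in the frontend, matching the layer split in the architecture table.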

Anvil Frontend

- •Authenticated UI (email/password, no auto signup)

- •Model selector (CNN vs Transformer)

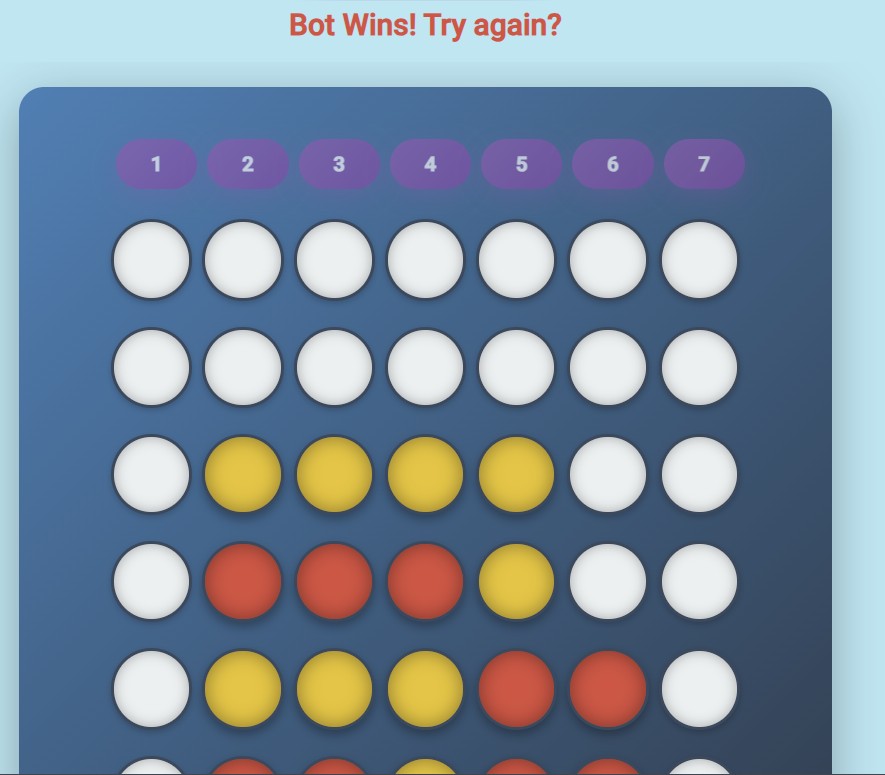

- •Real-time human vs bot gameplay

- •Tab navigation: Play Game | Training Description

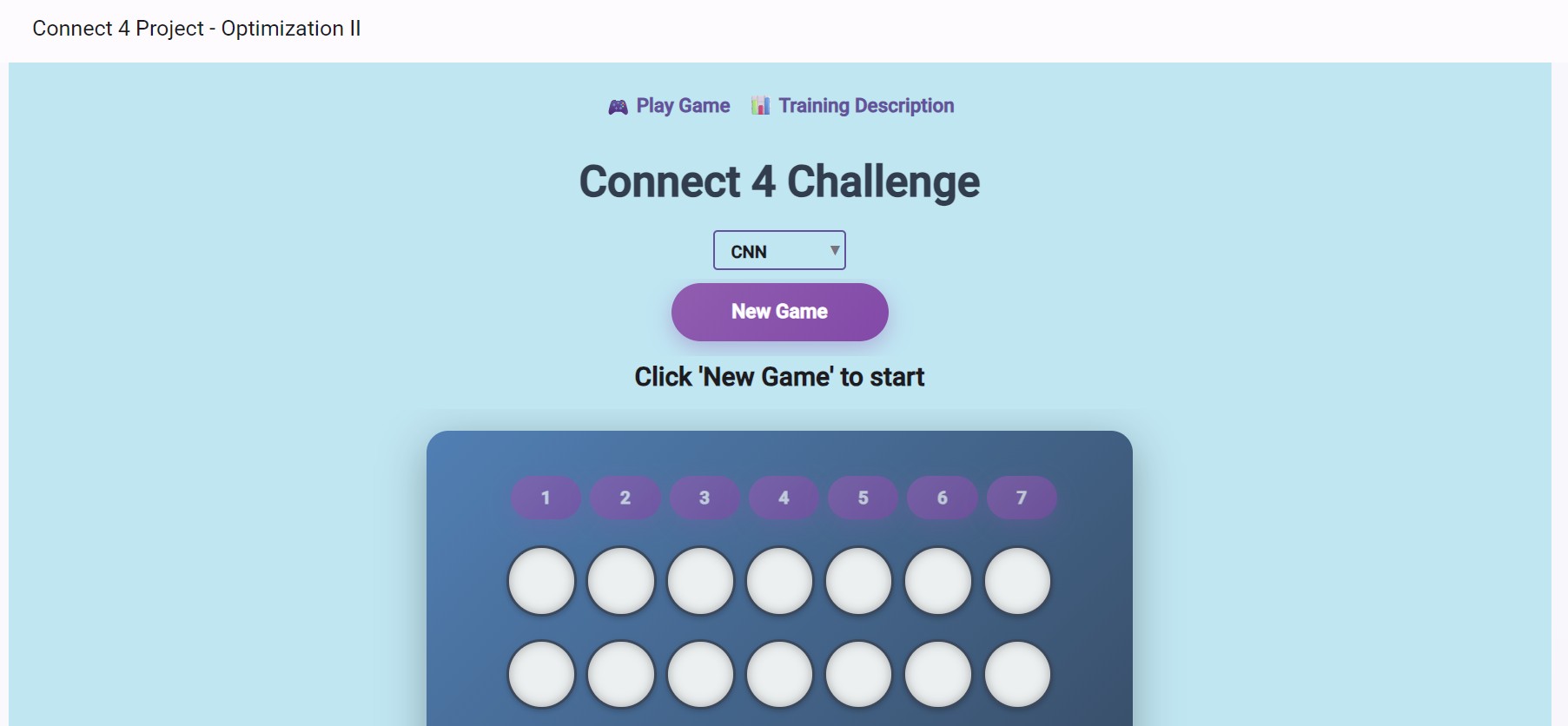

Anvil UI

The frontend was designed to resemble a polished consumer product: gradient background, rounded board container, elevated shadows, distinct yellow/red piece styling, animated feedback messages.

Game interface with model selector and board

Interactive gameplay view

Model Performance Visualization

High-confidence board states where models excel

Challenging scenarios (multi-move traps, endgames)

Engineering Tradeoffs

| Dimension | CNN | Hybrid Transformer |
|---|---|---|
| Accuracy | Strong | Best |
| Latency | Excellent | Moderate |
| Training Cost | Low | High |
| Implementation Complexity | Moderate | High |

Both models were deployed to allow direct comparison in the live Anvil app.

Key Takeaways

- Inductive bias matters more than model novelty; small spatial grids favor CNNs
- Transformers benefit from hybridization with spatial feature extractors
- Supervised imitation from MCTS labels has inherent planning limits; neural-guided search (AlphaZero-style) is the natural next step
- Production deployment adds non-trivial engineering overhead: containerization, cloud hosting, authenticated UI
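As a pointer toward neural-guided search: in AlphaZero-style MCTS, the trained policy supplies the prior P(s,a) in the PUCT rule U(s,a) = c_puct · P(s,a) · √(Σ_b N(s,b)) / (1 + N(s,a)), and search descends into the child maximizing Q(s,a) + U(s,a). The sketch covers only that selection step; the `c_puct` default of 1.5 is an arbitrary illustrative value, and a full search would also need expansion, evaluation, and backup.

```python
import math


def puct_select(priors, visit_counts, q_values, c_puct=1.5):
    """AlphaZero-style PUCT child selection.

    priors:       P(s,a), e.g. the CNN's softmax output over the 7 columns
    visit_counts: N(s,a), visits to each child so far
    q_values:     Q(s,a), mean backed-up value of each child
    Returns the index maximising Q(s,a) + U(s,a).
    """
    sqrt_total = math.sqrt(sum(visit_counts))
    best, best_score = None, -math.inf
    for a, (p, n, q) in enumerate(zip(priors, visit_counts, q_values)):
        # Exploration bonus: large for high-prior, rarely visited children.
        score = q + c_puct * p * sqrt_total / (1 + n)
        if score > best_score:
            best, best_score = a, score
    return best
```

The prior lets search spend its rollouts on moves the network already likes, while the visit-count denominator forces it to check alternatives, which is how search can catch the multi-move traps the network alone misses.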

Future Improvements

Outcome

The final system reaches 81.7% Top-1 move-prediction accuracy (hybrid model), avoids catastrophic tactical blunders, runs inference in under 4 ms, supports authenticated interactive gameplay, and is fully containerized and cloud-hosted. The project covers the full ML lifecycle with measurable outcomes at each stage: search-supervised data generation, architecture experimentation, regularization tuning, Docker containerization, cloud deployment, and frontend integration.