MSBA Capstone — Diligence

Property Insights Engine

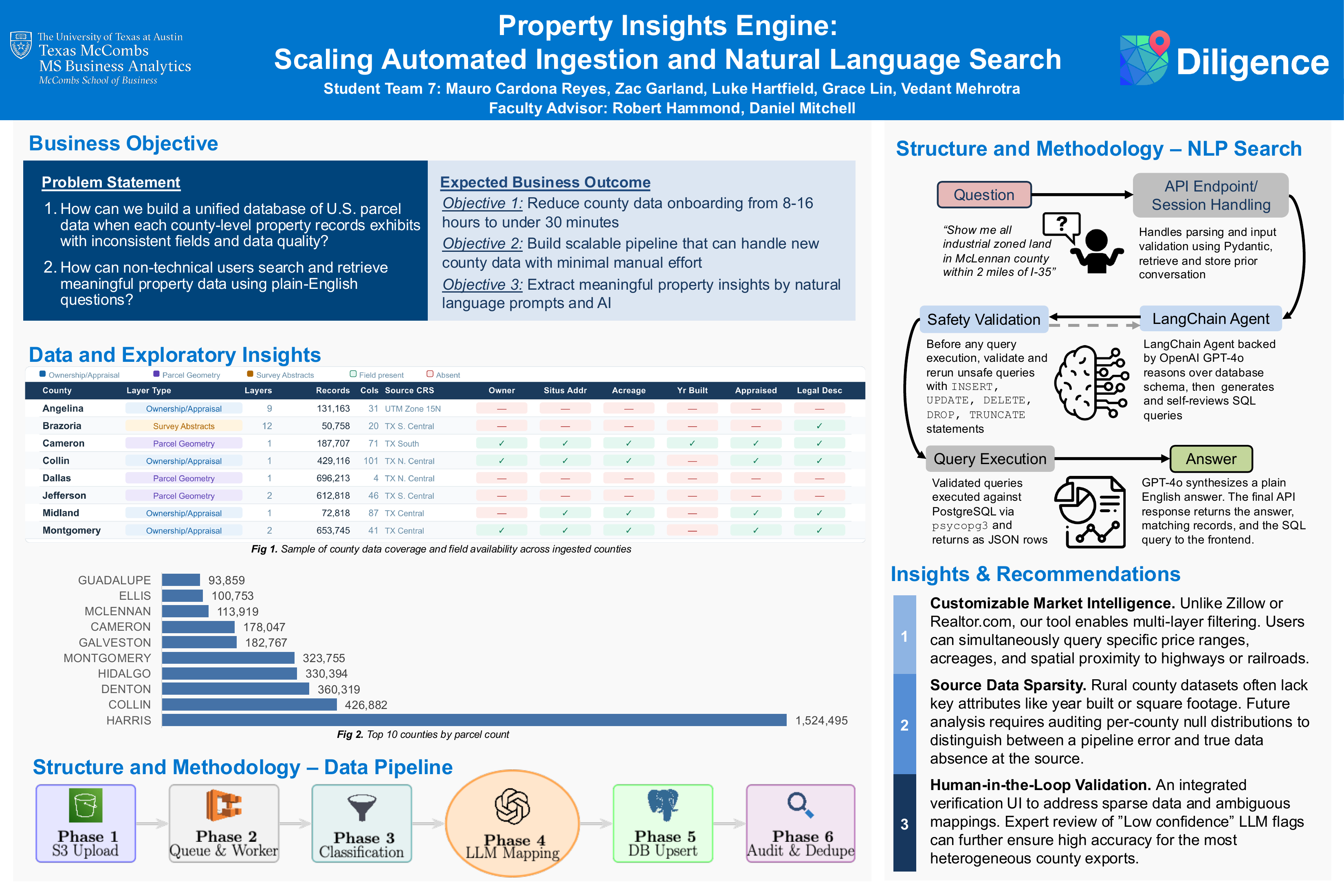

Scaling automated ingestion and natural language search for a parcel intelligence platform — reducing per-county onboarding from 8–16 hours of manual work to under 30 minutes of automated processing.

- Context

- McCombs MSBA Capstone, Spring 2026 — Group 7

- Sponsor

- Diligence — full-service parcel intelligence platform

- Pipeline Stack

- Python, AWS S3 + ECS, PostgreSQL/PostGIS, OpenAI, boto3, GeoPandas, psycopg3

- Search Stack

- LangChain, GPT-4o, FastAPI, Next.js, PostgreSQL

Project Poster

The Problem

U.S. property records are maintained at the county level with no standardization — inconsistent column names, file formats (CSV, shapefile, GeoJSON), and data quality across 3,000+ counties. Diligence's existing MVP required 8–16 hours of manual data engineering per county to ingest and normalize, making nationwide scale economically infeasible without proportional headcount growth.

A secondary barrier was accessibility: extracting insights required writing SQL against a schema that varied county-by-county, limiting the platform to technical users.

Two Parallel Workstreams

Automated Data Pipeline

LLM-assisted, queue-driven ingestion that normalizes heterogeneous county parcel data into a unified PostgreSQL schema — without county-specific code.

NLP Search Interface

LangChain + GPT-4o SQL agent that translates plain-English property queries into validated, read-only SQL — with multi-turn conversation support.

Pipeline Architecture — 6 Phases

The pipeline is queue-driven and event-triggered: a new file upload to S3 automatically enqueues a job and starts an ECS worker — no manual operator intervention required.

S3 as Source of Truth

County data (ZIP, shapefile, GeoJSON, CSV) uploaded to S3. File arrival handler detects county from path and writes job to queue.

Queue-Driven Worker Orchestration

ECS worker polls on a 1-minute interval, claims one county job at a time. Successful jobs are removed; failures are retained for retry without blocking the rest of the batch.

Archive Reading & File Classification

Recursively unpacks archives. LLM evaluates each candidate file — road centerlines, flood zones, and subdivision boundaries are rejected before any DB operations begin.

LLM-Assisted Column Mapping

GPT-4o receives source column names + sample values and maps them to the target schema. Temperature=0 for deterministic output. A field named ACREAGE, Acres, legal_acre, or TP_AREA all resolve correctly.

Chunked Idempotent Upsert

Records processed in 2,000-row chunks. Keyed on (prop_id, county) — safe to re-run on the same file. Geometry reprojected to WGS84; only Polygon/MultiPolygon types retained.

Audit Logging & ETag Deduplication

ETag hash checked before processing. Unchanged files are skipped entirely — no download, no upsert. Partial progress logged to CloudWatch even on job failure.

NLP Search — 8 Stages

Users submit free-text questions through the Next.js map interface. The agent inspects the live database schema, writes SQL, self-reviews it, passes it through a deterministic read-only safety validator, executes it, and returns a plain-English answer — all in a single request. Conversation history is maintained across turns so users can refine without restating context.

Safety Guarantee

A rule-based validator rejects any SQL containing INSERT, UPDATE, DELETE, DROP, or TRUNCATE before execution — independent of the LLM. Cannot be bypassed through prompt manipulation.

Live Schema Introspection

The agent reads the actual database schema on each request rather than a hardcoded description. New columns are automatically available without any prompt updates.

Self-Review Step

After generating SQL, the agent re-reads its own query via sql_db_query_checker to catch logical or syntactic errors before execution, reducing retry loops.

Multi-Turn Sessions

Up to 20 prior turns are prepended to each prompt. Follow-up questions correctly reference prior context; sessions are isolated so concurrent users don't affect each other.

Example queries handled: acreage threshold filters, ownership lookups, county-level aggregates, multi-turn refinement ("now filter to parcels over 5 acres").

Results

(from 8–16 hrs)

per county

savings at 200 counties

processed

Validation — Rockwall County

The pipeline evaluated four layers in the Rockwall County GIS archive. Two were correctly ingested (parcel ownership polygons), two were correctly rejected (road centerlines, subdivision boundaries) — with no manual intervention required at any stage.

| Layer | Geometry | Outcome |

|---|---|---|

| rockwall_ownership | Polygon | Ingested |

| rockwall_parcels | Polygon | Ingested |

| rockwall_roads | LineString | Rejected |

| rockwall_subdivisions | Polygon | Rejected |

Tech Stack

Pipeline

NLP Search

Capstone Presentation

Group 7 with the Diligence sponsor after the final presentation

Outcome

The system delivers a fully automated, county-agnostic ingestion pipeline with no county-specific code — any new county is added by uploading data to S3 and reviewing one generated JSON mapping file. Paired with an NLP search interface that removes the SQL barrier for non-technical users, the platform is production-ready for phased national rollout. Conservative projections estimate $160,000–$220,000 in annual labor savings at 200 counties, with additional ROI from accelerated deal sourcing and earlier geographic coverage.